Terraform Test Capabilities

The test subcommand has been introduced in the latest release of Terraform (v1.6.0), deprecating the experimental test command. Terraform tests are written in .tftest.hcl files, containing a series of run blocks. Each run block executes a Terraform plan and optional apply against the Terraform configuration under test and can check conditions against the resulting plan and state.

In this article we’ll explore Terraform’s built-in testing capabilities, with a focus on the new Terraform Test Framework in v1.6.0 (now GA, previously experimental).

Introduction

Confirming that deployments succeed, and identifying any errors or unexpected changes in our infrastructure, is paramount to the level of confidence we can have in those deployments. By adopting testing strategies we can verify that our approach has been thoroughly tested before it reaches production.

Terraform offers many testing capabilities to ensure that your infrastructure is correct. These capabilities can be divided into two distinct categories:

- validating your configuration and infrastructure as part of your regular Terraform operations

- performing traditional unit and integration testing on your Terraform code

The official documentation has plenty of information on Custom Conditions and Checks, so you can learn more about the first testing capability there. If you are looking for information on check blocks in particular, I recommend you explore these articles on #unfriendlygrinch as well: Terraform check{} Block and Terraform check{} Block (Continued).

Let’s continue to talk about the no longer experimental test command, starting with a bit of history.

Brief history

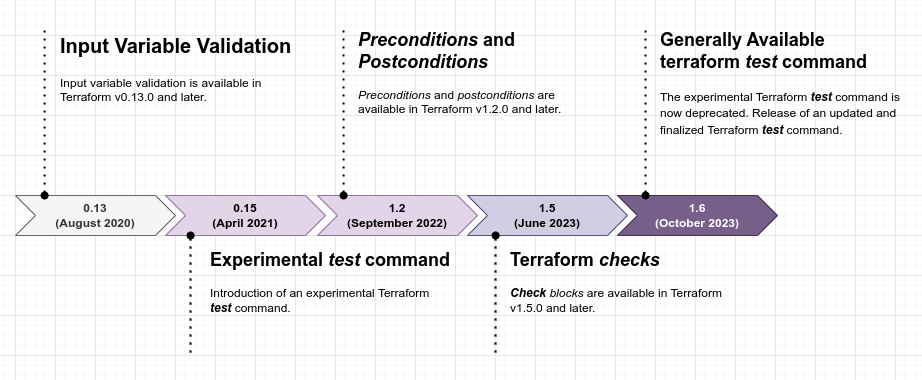

To help ensure that the outcome of Terraform deployments is of high quality, a number of testing capabilities have been introduced over the following versions:

- Terraform v0.13.0 introduced Input Variable Validation.

- Terraform v0.15.0 introduced an experimental Terraform test command.

- Terraform v1.2.0 introduced Pre and Post-conditions.

- Terraform v1.5.0 introduced Checks.

- Terraform v1.6.0 deprecated the experimental Terraform test command and replaced it with an updated and finalized command.

To really grasp the timeline, I suggest visualizing it in the form of a diagram:

Testing capabilities for Terraform

As I’ve already mentioned, testing capabilities for Terraform come in two distinct categories.

- The first is configuration and infrastructure validation, which is, in my view, a vital part of regular Terraform operations.

- The second is more traditional unit and integration testing of the configuration.

Both categories of testing are essential for ensuring that Terraform operations run as expected and that any resulting changes are secure and adhere to desired standards. They include testing the syntax, the behavior of the configuration, and the changes that can occur from running different operations.

The first capability includes custom conditions and check blocks, whereas the second is provided by the new Terraform test subcommand.

Custom conditions use cases

When selecting the right custom conditions for your Terraform use case, it is important to understand the differences between the available options.

Generally speaking, check blocks with assertions should be used to validate your infrastructure as a whole, as these blocks don’t impede the overall execution of operations. Meanwhile, validation conditions (Input Variable Validation) and output preconditions should be used to ensure that the configuration’s inputs and outputs satisfy specific criteria. Last but not least, the lifecycle block inside a resource or data block can include both precondition and postcondition blocks. They can be used to validate that Terraform is producing the desired configuration results.

About Preconditions and Postconditions

Preconditions and postconditions have been available in Terraform since v1.2.0. One can use precondition and postcondition blocks to create custom rules for resources, data sources, and outputs. As a general rule, Terraform checks a precondition before evaluating the object it is associated with and checks a postcondition after evaluating the object. Please keep in mind that Terraform evaluates custom conditions as early as possible, but must defer conditions that depend on unknown values until the apply phase.

Often forgotten when talking about validation per se, the Terraform conditional is a way of using the value of a boolean expression to select one of two values. The condition can be any expression that resolves to a boolean value, but will usually be an expression that uses the equality, comparison or logical operators.

The syntax of a conditional expression is as follows: condition ? true_value : false_value.

The Terraform configuration language is expressive enough to describe highly complex operations for generating values. This flexibility lets users tailor their infrastructure configuration precisely to their needs.

We often see a locals block used to create a resource name based on different conditional values. It can also be used to capture the names of resources in a map of resource tags. For example, straight from Terraform’s documentation:

resource "random_id" "id" {

byte_length = 8

}

locals {

name = (var.name != "" ? var.name : random_id.id.hex)

owner = var.team

common_tags = {

Owner = local.owner

Name = local.name

}

}

This type of functionality is quite convenient because it gives us the ability to easily reference and manipulate resources within a cloud infrastructure. As mentioned, the syntax of a conditional expression itself is rather simple. First we define the condition, then the outcomes for true and false evaluations.

In the above example, if var.name is not empty, checked by using the (in)equality operator != to match "", local.name is set to the var.name value. Otherwise, the name becomes the generated random_id.

Conditions Checked Only During Apply

We know that Terraform evaluates custom conditions as early as possible. We also know that input variable validations are only applicable to the variable value and are evaluated by Terraform immediately. Check assertions, preconditions, and postconditions, on the other hand, depend on Terraform evaluating whether the value(s) associated with the condition are known before or after applying the configuration.

Looking at the documentation we can extract the following:

- Known before apply: Terraform checks the condition during the planning phase. For example, Terraform can know the value of an image ID during planning as long as it is not generated from another resource.

- Known after apply: Terraform delays checking that condition until the apply phase. For example, AWS only assigns the root volume ID when it starts an EC2 instance, so Terraform cannot know this value until apply.
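As a rough illustration of the two cases, the sketch below attaches one condition of each kind to a Key Vault. The variable names (kv_name, sku_name), the resource labels and the conditions themselves are assumptions made for this example, and the provider configuration is omitted. The precondition depends only on an input variable, so Terraform can check it while planning. The postcondition reads vault_uri, a computed attribute, so for a new vault the check is deferred until apply.

```hcl
variable "kv_name" {
  type = string
}

variable "sku_name" {
  type    = string
  default = "standard"
}

data "azurerm_client_config" "current" {}

resource "azurerm_resource_group" "example" {
  name     = "example-rg"
  location = "northeurope"
}

resource "azurerm_key_vault" "example" {
  name                = var.kv_name
  location            = azurerm_resource_group.example.location
  resource_group_name = azurerm_resource_group.example.name
  tenant_id           = data.azurerm_client_config.current.tenant_id
  sku_name            = var.sku_name

  lifecycle {
    # Known before apply: var.sku_name is a plain input value,
    # so this condition can be evaluated during planning.
    precondition {
      condition     = contains(["standard", "premium"], var.sku_name)
      error_message = "sku_name must be either standard or premium."
    }

    # Known after apply: vault_uri is computed on creation,
    # so for a new vault this condition is checked during apply.
    postcondition {
      condition     = startswith(self.vault_uri, "https://")
      error_message = "The Key Vault URI should use HTTPS."
    }
  }
}
```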

When applying Terraform configurations, a failed precondition prevents Terraform from carrying out any planned actions for the associated resource. A failed postcondition, however, halts processing after Terraform has already taken the respective actions. Those actions will not be reversed, but the failure will prevent any further downstream actions that rely on the resource. Because Terraform typically has less information when creating the initial configuration than when applying subsequent changes, it may check conditions during apply for the initial creation and then during planning for any subsequent updates.

Preconditions can be used to complement input variable validation blocks and offer a balanced alternative to input validation, allowing terraformers to take preventative measures against undesirable output values. Through preconditions, Terraform can be prevented from storing an invalid output value, while simultaneously preserving a valid output value from the previous run when applicable. Prior to evaluating the value expression to finalize the result, Terraform evaluates the preconditions, allowing them to take precedence over any potential errors in the value expression. This offers a powerful tool to ensure the expected values and outputs are received.
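A minimal sketch of an output precondition follows. It assumes the module contains a resource named azurerm_key_vault.example; the output name and the retention rule are hypothetical and only illustrate the mechanism.

```hcl
output "key_vault_uri" {
  description = "URI of the Key Vault managed by this module."
  value       = azurerm_key_vault.example.vault_uri

  # Evaluated before the value expression: if it fails, Terraform
  # reports the error and does not store a new value for this output.
  precondition {
    condition     = azurerm_key_vault.example.soft_delete_retention_days >= 7
    error_message = "The Key Vault must retain soft-deleted items for at least 7 days."
  }
}
```

Placing the rule on the output, rather than on the resource, guards what the module exposes to its callers without blocking the resource itself.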

Let’s take a look at the azurerm_key_vault resource. As usual, we have a set of arguments which are supported. Imagine we need to create a module to manage Azure Key Vault, so we can package and reuse our resource configurations with Terraform. By using preconditions along with variable validation, it is possible to ensure the integrity of both the inputs and outputs of the module’s operations.

One of the arguments refers to Access Policies, which are fine-grained policies controlling access to the Vault items. The policy can be configured either inline or via the separate azurerm_key_vault_access_policy resource. For inline configuration we can use the following variable definition:

variable "access_policy" {

type = list(object({

tenant_id = optional(string)

object_id = optional(string)

application_id = optional(string)

certificate_permissions = optional(list(string), [])

key_permissions = optional(list(string), [])

secret_permissions = optional(list(string), [])

storage_permissions = optional(list(string), [])

}))

default = null

validation {

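# alltrue() makes the rule fail when any item contains an unsupported value; can() alone only checks that the expression evaluates.

condition = var.access_policy == null ? true : alltrue([for item in var.access_policy : length(setsubtract(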

item.certificate_permissions,

["Backup", "Create", "Delete", "DeleteIssuers", "Get", "GetIssuers", "Import", "List", "ListIssuers", "ManageContacts", "ManageIssuers", "Purge", "Recover", "Restore", "SetIssuers", "Update"],

)) == 0])

error_message = "The certificate_permissions items contain non-valid value(s)."

}

validation {

condition = var.access_policy == null ? true : alltrue([for item in var.access_policy : length(setsubtract(

item.key_permissions,

["Backup", "Create", "Decrypt", "Delete", "Encrypt", "Get", "Import", "List", "Purge", "Recover", "Restore", "Sign", "UnwrapKey", "Update", "Verify", "WrapKey", "Release", "Rotate", "GetRotationPolicy", "SetRotationPolicy"],

)) == 0])

error_message = "The key_permissions items contain non-valid value(s)."

}

validation {

condition = var.access_policy == null ? true : alltrue([for item in var.access_policy : length(setsubtract(

item.secret_permissions,

["Backup", "Delete", "Get", "List", "Purge", "Recover", "Restore", "Set"],

)) == 0])

error_message = "The secret_permissions items contain non-valid value(s)."

}

validation {

condition = var.access_policy == null ? true : alltrue([for item in var.access_policy : length(setsubtract(

item.storage_permissions,

["Backup", "Delete", "DeleteSAS", "Get", "GetSAS", "List", "ListSAS", "Purge", "Recover", "RegenerateKey", "Restore", "Set", "SetSAS", "Update"],

)) == 0])

error_message = "The storage_permissions items contain non-valid value(s)."

}

}

Notice the validation blocks within the variable block which specify custom conditions. Also, the code creating the resource would look like this:

resource "azurerm_key_vault" "example" {

name = "examplekeyvault"

location = azurerm_resource_group.example.location

resource_group_name = azurerm_resource_group.example.name

enabled_for_disk_encryption = true

tenant_id = data.azurerm_client_config.current.tenant_id

soft_delete_retention_days = 7

purge_protection_enabled = false

sku_name = "standard"

# ... (other azurerm_key_vault arguments) ...

dynamic "access_policy" {

for_each = var.access_policy != null ? var.access_policy : []

content {

tenant_id = data.azurerm_client_config.current.tenant_id

object_id = access_policy.value["object_id"]

certificate_permissions = access_policy.value["certificate_permissions"]

key_permissions = access_policy.value["key_permissions"]

secret_permissions = access_policy.value["secret_permissions"]

storage_permissions = access_policy.value["storage_permissions"]

}

}

timeouts {

create = "30m"

read = "5m"

update = "30m"

delete = "30m"

}

lifecycle {

precondition {

condition = var.access_policy == null ? true : length(var.access_policy) <= 1024

error_message = "Up to 1024 objects describing access policies are allowed."

}

}

}

The above example uses a precondition to validate that at most 1024 objects describing access policies are supplied.

Moving on to postconditions: for the module we imagined, it is important to verify that the location is either “northeurope” or “westeurope”. Adding a postcondition is an effective way to do this. A validation on the variable could produce the same outcome, but that approach can be harder to follow once modules are involved. A postcondition ensures that the location is set correctly:

lifecycle {

postcondition {

condition = contains(["northeurope", "westeurope"], azurerm_resource_group.example.location)

error_message = "Location should be either northeurope or westeurope!"

}

}
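Inside a resource’s own lifecycle block, a postcondition can also refer to that resource through self. A minimal sketch, assuming the rule is attached to the resource group itself (the resource label, name and hard-coded location are illustrative, not taken from the module):

```hcl
resource "azurerm_resource_group" "example" {
  name     = "example-rg"
  location = "northeurope"

  lifecycle {
    postcondition {
      # self refers to the resource group this lifecycle block belongs to.
      condition     = contains(["northeurope", "westeurope"], self.location)
      error_message = "Location should be either northeurope or westeurope!"
    }
  }
}
```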

Checks with Assertions

With Check blocks we can validate our infrastructure outside the usual resource lifecycle. To get a specified secret from our newly created Key Vault:

check "health_check" {

data "http" "my_key_vault" {

url = azurerm_key_vault.example.vault_uri

}

assert {

condition = data.http.my_key_vault.status_code == 401

error_message = "${data.http.my_key_vault.url} returned an unhealthy status code"

}

}

This operation requires the secrets/get permission, which we haven’t configured. Without any mechanism to authenticate, our API call should return a 401 status code, meaning the request to Key Vault is unauthenticated.

Further to my previous comments, you can find more on check blocks by exploring #unfriendlygrinch: Terraform check{} Block and Terraform check{} Block (Continued).

The new Test Framework

Terraform 1.6 is now generally available! This version contains a whole host of features, but the most exciting of all is the powerful new testing framework which helps authors to validate their code with systematic tests.

The anatomy of a test is quite simple. All tests for Terraform are placed in individual test files. These test files are discovered by Terraform through the file extension: either .tftest.hcl or .tftest.json. Each test file contains the following root level attributes and blocks:

- zero or one variables block providing test values

- zero or more provider blocks

- one or more run blocks

Terraform executes run blocks in order, simulating a series of Terraform commands executed directly within the configuration directory. The order of the variables and provider blocks doesn’t matter, as Terraform processes all the values within these blocks at the beginning of the test operation. HashiCorp does recommend defining your variables and provider blocks first, at the beginning of the test file.
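Put together, a test file could be laid out roughly as follows. This is only a sketch: the file name, the location variable and the resource_group_location output are hypothetical and assume the configuration under test declares them.

```hcl
# tests/example.tftest.hcl (hypothetical file name)

# Zero or one variables block: values shared by every run block in this file.
variables {
  location = "northeurope"
}

# Zero or more provider blocks.
provider "azurerm" {
  features {}
}

# One or more run blocks, executed in the order in which they appear.
run "location_is_passed_through" {
  command = plan

  assert {
    # Assumes the configuration exposes an output with this name
    # whose value is already known at plan time.
    condition     = output.resource_group_location == "northeurope"
    error_message = "The location supplied by the test file was not applied."
  }
}
```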

Run blocks

In Terraform, tests create real infrastructure by default and run assertions and validations against that infrastructure, making it analogous to integration testing. However, this behavior can be modified by updating the command attribute within a run block. By default it is set to command = apply and instructs Terraform to execute a complete apply operation against the configuration. If we replace this value with command = plan, it instructs Terraform not to create new infrastructure for that particular run block, allowing test authors to validate logical operations and custom conditions within their infrastructure, a process that is analogous to unit testing.

Each run block has the following fields and blocks, all fully described in the official documentation:

- The command attribute and plan_options block control which Terraform operation the run block executes. If they are not specified, the default is a standard Terraform apply operation.

- Terraform run block assertions are Custom Conditions, consisting of a condition and an error message. When the terraform test command completes, Terraform reports whether the tests passed or failed, with a detailed account of any failed assertions.

- The test file syntax supports variables blocks at both the root level and within run blocks, therefore you can override variable values for a particular run block with values provided directly within that run block.

- If needed, you can also set or override the required providers within the main configuration from your testing files by using provider and providers blocks and attributes.

- Terraform tests the configuration within the directory you execute the terraform test command from (you can change the target you point to with the -chdir argument). Each run block also allows the user to change the targeted configuration using the module block. Unlike the traditional module block, the module block within test files only supports the source attribute and the version attribute. Please also note that Terraform test files only support local and registry modules within the source attribute. The use cases for the module block within a testing file are to either add the infrastructure the main configuration requires for testing or to load and validate secondary infrastructure (such as data sources) that is not created directly by the main configuration being tested.

- By default, if any Custom Conditions or check block assertions fail during the execution of a Terraform test file, the overall command will report the test as a failure. However, to test potential failure cases, Terraform supports the expect_failures attribute. This attribute can be included in each run block and provides a list of checkable objects, such as resources, data sources, check blocks, input variables, and outputs. If these checkable objects report an issue, the test passes, and the test fails overall if they do not.
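The expect_failures behaviour described in the last bullet can be sketched as follows. The environment variable and its allowed values are hypothetical and exist only for this illustration. The first block would live in the configuration under test:

```hcl
# variables.tf of the configuration under test (hypothetical variable)
variable "environment" {
  type = string

  validation {
    condition     = contains(["dev", "prod"], var.environment)
    error_message = "environment must be either dev or prod."
  }
}
```

The second block would live in a .tftest.hcl file and deliberately supplies a value the validation rule rejects:

```hcl
run "rejects_unknown_environment" {
  command = plan

  variables {
    environment = "staging"
  }

  # The run passes only if the validation on var.environment fails.
  expect_failures = [
    var.environment,
  ]
}
```

This turns a negative case into a passing test, so a rule that silently stops rejecting bad input shows up as a test failure.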

Examples

Continuing our azurerm_key_vault journey, let’s create some tests. Our final local tree will look like this:

$ tree

.

├── locals.tf

├── main.tf

├── modules

│ ├── keyvault

│ │ ├── locals.tf

│ │ ├── main.tf

│ │ ├── outputs.tf

│ │ ├── variables.tf

│ │ └── versions.tf

│ └── rg

│ ├── locals.tf

│ ├── main.tf

│ ├── outputs.tf

│ ├── variables.tf

│ └── versions.tf

├── outputs.tf

├── terraform.log

├── terraform.tfvars

├── tests

│ ├── 1_rg.tftest.hcl

│ └── 2_kv.tftest.hcl

└── variables.tf

4 directories, 18 files

Even if our module has some input validation, we want to make sure it works the way we expect it to. In our tests folder let’s create a test file named 1_rg.tftest.hcl:

variables {

resource_groups = [

{

name = "testframework"

location = "North Europe"

count = 1

additional_tags = {

1 = {

description = "Test Framework Resource Group in NorthEurope."

}

}

}

]

}

provider "azurerm" {

features {}

}

run "valid_resource_group_name" {

command = plan

assert {

condition = length(module.rg["testframework.NorthEurope"].resource_group_names[0]) >= 1 && length(module.rg["testframework.NorthEurope"].resource_group_names[0]) <= 90 && can(regex("^[\\w\\(\\)\\-\\.]+$", module.rg["testframework.NorthEurope"].resource_group_names[0])) && !endswith(module.rg["testframework.NorthEurope"].resource_group_names[0], ".")

error_message = <<EOT

Err: Resource Group Name must meet the following requirements:

Between 1 and 90 characters long.

Alphanumerics, underscores, parentheses, hyphens, periods.

Can't end with period.

Resource Group names must be unique within a subscription.

EOT

}

}

We know that a resource group name must respect the naming rules and restrictions for Azure resources:

- Must not exceed maximum length of ‘90’ characters.

- Underscores, hyphens, periods, parentheses, and letters or digits as defined by the Char.IsLetterOrDigit function.

- Valid characters are members of the following categories in UnicodeCategory: UppercaseLetter, LowercaseLetter, TitlecaseLetter, ModifierLetter, OtherLetter, DecimalDigitNumber.

- Can’t end with period.

The above condition will check the name against those very limitations, using the output passed from the rg module (resource_group_names). We need to start with a terraform init and then run the terraform test command:

$ terraform init

Initializing the backend...

Initializing modules...

Initializing provider plugins...

- Reusing previous version of hashicorp/azurerm from the dependency lock file

- Using previously-installed hashicorp/azurerm v3.75.0

Terraform has been successfully initialized!

You may now begin working with Terraform. Try running "terraform plan" to see

any changes that are required for your infrastructure. All Terraform commands

should now work.

If you ever set or change modules or backend configuration for Terraform,

rerun this command to reinitialize your working directory. If you forget, other

commands will detect it and remind you to do so if necessary.

$ terraform test

tests/1_rg.tftest.hcl... in progress

run "valid_resource_group_name"... pass

tests/1_rg.tftest.hcl... tearing down

tests/1_rg.tftest.hcl... pass

Success! 1 passed, 0 failed.

By overriding the resource_groups variable we can specify an invalid name. We can do this with values provided directly within that run block:

# ... (other arguments) ...

run "valid_resource_group_name" {

command = plan

variables {

resource_groups = [

{

name = "testframework."

location = "North Europe"

}

]

}

# ... (other arguments) ...

}

If we run the test again, it will fail:

$ terraform test

tests/1_rg.tftest.hcl... in progress

run "valid_resource_group_name"... fail

╷

│ Error: Invalid value for variable

│

│ on main.tf line 28, in module "rg":

│ 28: name = each.value.name

│ ├────────────────

│ │ var.name is "testframework."

│

│ Err: Resource Group Name must meet the following requirements:

│

│ Between 1 and 90 characters long.

│ Alphanumerics, underscores, parentheses, hyphens, periods.

│ Can't end with period.

│ Resource Group names must be unique within a subscription.

│

│ This was checked by the validation rule at modules/rg/variables.tf:11,3-13.

╵

tests/1_rg.tftest.hcl... tearing down

tests/1_rg.tftest.hcl... fail

Failure! 0 passed, 1 failed.

Now let’s do an end-to-end integration test and refer to the Resource Group name generated in the setup step as input for a Key Vault resource_group_name. We will add a new test file named 2_kv.tftest.hcl:

provider "azurerm" {

features {}

}

run "setup_rg" {

command = apply

variables {

name = "testframework"

location = "North Europe"

}

module {

source = "./modules/rg"

}

}

run "public_network_access" {

command = plan

variables {

keyvaults = [

{

name = "tstfrmkv"

resource_group_name = run.setup_rg.resource_group_names[0]

sku_name = "standard"

public_network_access_enabled = true

}

]

}

assert {

condition = module.kv["tstfrmkv"].kv_public_access == true

error_message = "Public access should be enabled!"

}

}

We can now witness how runs can be chained together and refer to each other’s outputs:

$ terraform test

tests/2_kv.tftest.hcl... in progress

run "setup_rg"... pass

run "public_network_access"... pass

tests/2_kv.tftest.hcl... tearing down

tests/2_kv.tftest.hcl... pass

Success! 2 passed, 0 failed.

We should also address the use cases for the new test command. Use cases help to manage scope, establish requirements, and give technical and business stakeholders a shared understanding of system objectives, which in turn enables more informed risk management. Before we dive into the use cases, let’s take a moment to look at the producer-consumer infrastructure model.

The producer-consumer infrastructure model

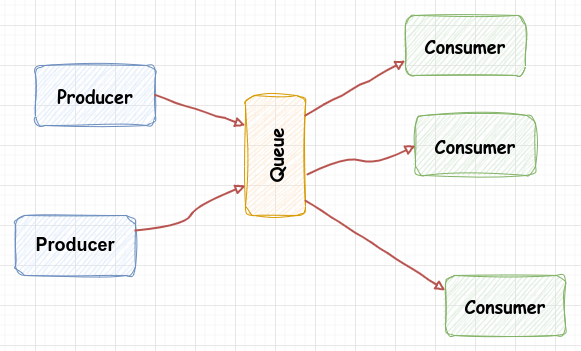

The Producer-Consumer pattern is an effective way to separate work that needs to be done from the execution of the said work. This pattern consists of two major components that are usually connected by a queue, allowing for the decoupling of the work item from its execution.

We have the Producer which can place items of work on the queue to be processed later, without having to worry about how the work will be processed, or how many consumers or producers are involved in the mix.

Likewise, the Consumer does not need to worry about the source of the work item, but simply needs to remove the item from the queue and process it. This ‘fire and forget’ approach makes the Producer-Consumer pattern an ideal choice for many workflow scenarios and the same applies to the infrastructure realm.

A simple workflow involving both producers and consumers will look like this:

Some time ago, HashiCorp published a nice post digging more into the producer-consumer model related to infrastructure.

Infrastructure producers are the individuals responsible for creating the infrastructure that others will use. These producers can be SREs, platform engineers, etc., and their role is to determine the most efficient way to provide and maintain the necessary resources. This includes provisioning on-prem/cloud services so that multiple teams can use them in a consistent manner, and overseeing these resources during their entire lifespan.

Infrastructure consumers are the people responsible for building or deploying applications. They might be developers who are mainly concerned with deploying applications, but not overly concerned with the inner workings of the infrastructure. Their primary objective is deploying applications, thus their focus is to have easy access to the necessary components.

As the two main stakeholders in the infrastructure provisioning process, these two personas have different requirements and responsibilities. At the same time, tight collaboration between the two groups is integral to an optimal DevOps process.

Terraform testing use cases

By outlining the ways in which users will interact with the system, use cases provide a comprehensive overview of system architecture, detailing the context, pre-requisite conditions, and post-conditions of the system. In sum, Terraform tests are a powerful tool for managing scope, while establishing and ensuring requirements.

Given the above description of the producer-consumer infrastructure model, we can assess the following:

- Input Validation, conditions and checks are consumer-centric, meaning they are part of the provisioning lifecycle. Their errors are relevant to the consumer, who can take action to prevent them from occurring.

- Terraform tests (available since v1.6.0) are producer-centric. They are not exposed to the consumer and can be separated from the provisioning lifecycle. Their true purpose is to validate behaviour during development of custom, reusable Terraform modules.

Using the new test command we can do unit testing to model different combinations of input values, to validate our logic, or to test things that should fail.

In case we need to test things known only after an apply, like verifying expected values for calculated attributes and outputs, the new testing framework can also provide integration testing. Testing new provider or Terraform versions should also be considered at this level.

Conclusion

Testing is an essential part of ensuring that your Terraform modules and configuration are functioning as intended prior to deployment. Depending on your system’s cost and complexity, there are a variety of testing strategies that can be employed. In this article, we explored the different types of tests available and how they can be applied to catch errors in Terraform configuration before production. By choosing the right testing approach, organizations can make their Terraform deployments more reliable and secure, and integrate tests into any workflow.

Please check out the additional resources on practices and patterns for testing Terraform in the next section.